The honest answer is a little annoying.

The best AI to code with is not automatically the one that writes the most impressive first draft. It is not always the one that feels fastest in a demo. It is not even always the one with the model you personally like best on a given Tuesday.

The best AI to code with is the one that helps you keep turning code into software.

That distinction matters more than people want it to.

A coding agent can generate a component, fix a bug, write tests, refactor a folder, explain a stack trace, or build a prototype. That is useful. But if you are building something real, the harder question is not "can it write code?"

The harder question is:

Can it stay reliable when the codebase gets weird?

Can it carry your standards forward?

Can it work inside your actual development loop?

Can you constrain it when you need safety?

Can you review what it did without feeling like you are cleaning up after a very confident intern with root access?

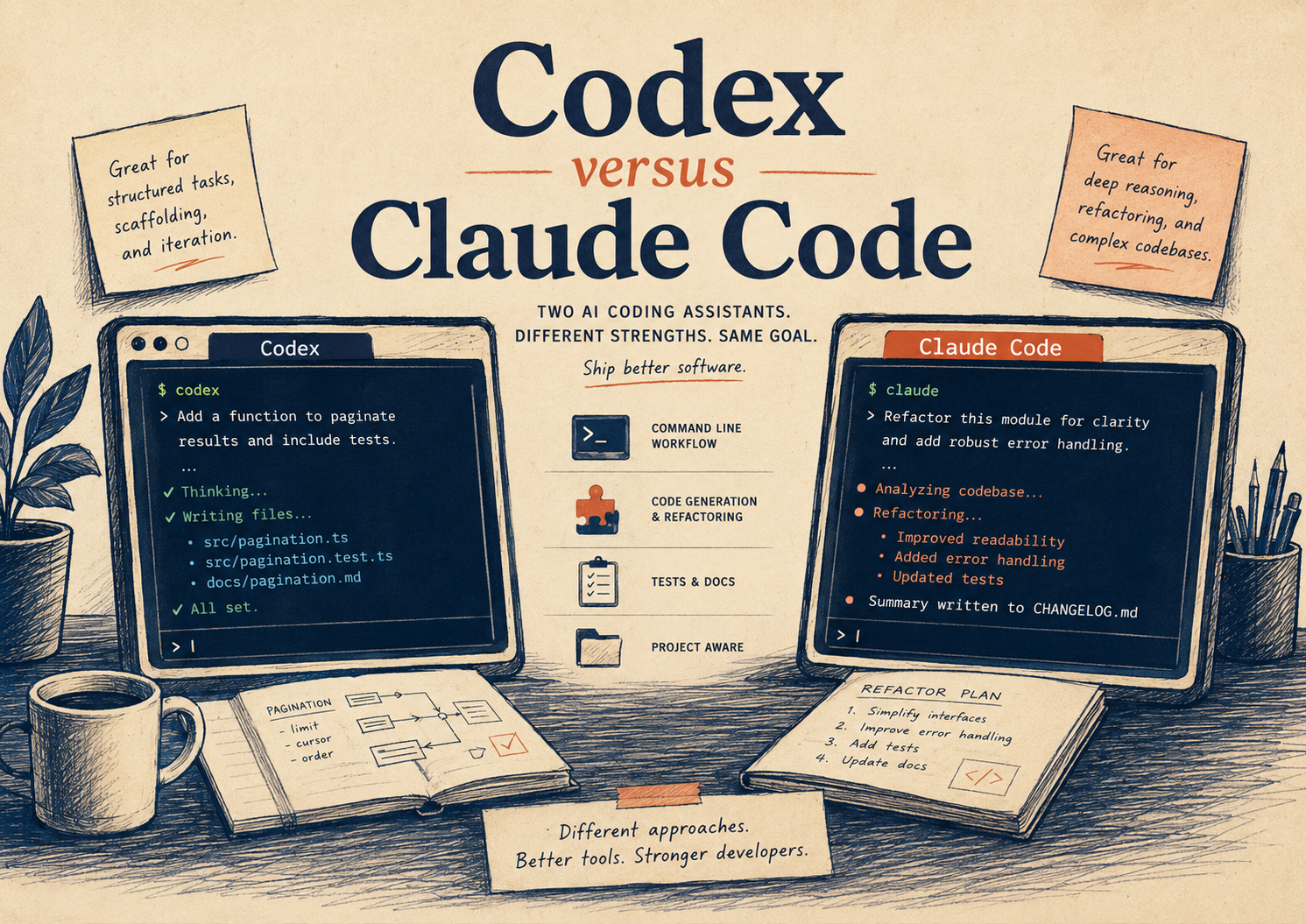

That is where the Codex versus Claude Code question gets interesting.

Quick answer: I personally choose Codex for long-term software work. Claude Code is genuinely excellent, especially if you are terminal-native and like composable command-line workflows. But Codex feels more reliable to me across the full development lifecycle: planning, local work, cloud tasks, durable repo guidance, review, sandboxing, and repeatable verification. That does not mean everyone should choose Codex. It means Codex fits the way I build.

What Codex and Claude Code actually are

Codex and Claude Code are both agentic coding tools.

That means they are not just autocomplete. They can inspect a codebase, reason across files, edit code, run commands, and help move a task from intention to implementation.

OpenAI describes Codex as available across the Codex app, IDE extension, CLI, and cloud. The official docs position it as something you configure over time with durable project guidance, sandbox controls, MCP tools, skills, automations, and review workflows.

Anthropic describes Claude Code as an agentic coding tool available in the terminal, IDE, desktop app, and browser. It can edit files, run commands, work with git, connect to tools through MCP, use CLAUDE.md files, create commits and pull requests, and fit naturally into command-line workflows.

So the shallow comparison is easy.

Both can code.

Both can run commands.

Both can read your project.

Both can use persistent instructions.

Both can integrate with external tools.

Both can help you go much faster than writing everything alone.

The real question is not whether either one is capable. They both are.

The real question is which one behaves better when you stop treating AI coding like a trick and start treating it like part of your engineering system.

The wrong way to compare coding agents

A lot of people compare coding agents by asking one of them to build a todo app, a Snake game, a landing page, or a small bug fix.

That is not useless. First impressions matter. If a tool cannot handle a small, clean task, that tells you something.

But the first task is not where coding agents become valuable.

The real test starts around task fifty.

That is when you have a dirty worktree, a half-remembered architectural decision, one flaky test, three slightly contradictory docs, a production edge case, and a feature that touches frontend, API, storage, telemetry, and deploy behavior.

That is when the agent needs more than intelligence.

It needs shape.

It needs boundaries.

It needs memory that is not just vibes.

It needs to know what done means.

That is the part I care about most.

Why I personally prefer Codex

I use Codex because it fits the way I want software work to feel over time.

Not just faster.

More repeatable.

More inspectable.

More capable of turning mistakes into durable improvements.

The Codex best practices describe the tool less like a one-off assistant and more like a teammate you configure and improve. That framing matches my experience. Codex gets better when you give it durable instructions, clear constraints, repo-specific context, test expectations, and a definition of done.

That matters because serious software work is mostly not "please generate code."

It is more like:

"Here is the existing system. Do not drift from it. Find the source of truth. Trace the callers. Preserve the contract. Add tests that prove allowed and denied behavior. Run the checks. Explain the remaining risk."

Codex handles that style of work well because its workflow is built around more than the current prompt.

There is AGENTS.md for durable repository guidance. There is config.toml for configuration across sessions and surfaces. There are sandbox and approval modes. There are skills for repeatable workflows. There are subagents for bounded exploration. There is review support. There is cloud work for background tasks. There are local app, IDE, and CLI surfaces.

Those pieces add up.

Individually, none of them sound magical. Together, they make Codex feel less like a coding chatbot and more like a development environment that happens to contain an agent.

That is the part I trust.

Where Claude Code is very strong

Claude Code deserves real respect.

If your brain lives in the terminal, Claude Code can feel extremely natural. Anthropic’s docs emphasize its command-line workflow, scripting, piping, git behavior, MCP integrations, hooks, memories, and cross-surface availability. That is a good product thesis.

It meets developers where many of them already are.

You can run claude in a project, pipe logs into it, ask it to review changed files, configure MCP servers, use CLAUDE.md, set permissions, and wire hooks around actions. The official docs also describe Claude Code’s security posture around permissions, read-only defaults, write restrictions, and sandboxed bash behavior in its security documentation.

That is not a toy.

For some people, Claude Code may absolutely be the better tool.

If you want a terminal-first assistant that feels close to your shell, Claude Code is compelling. If your team already works heavily in Anthropic’s ecosystem, it may fit cleanly. If you like the Unix-style idea that tools should compose, pipe, and automate, Claude Code has a very coherent point of view.

I understand why people love it.

I just do not think "great in the terminal" is the same thing as "best long-term coding system for me."

The difference that matters to me

Here is the simplest way I can say it:

Claude Code feels strongest as a powerful coding agent that lives naturally inside developer workflows.

Codex feels strongest as an operating layer for agentic software work.

That is not a universal truth. It is my read after using these tools in the context that matters to me: building Vibecodr.Space, a platform where small mistakes can become system promises if I am not careful.

I do not need an agent that only helps me move fast.

I need an agent that can slow down correctly.

I need it to inspect before acting. I need it to preserve source-of-truth boundaries. I need it to run tests. I need it to notice contradictions. I need it to treat security and UX as part of the same system. I need it to be coachable through files that future sessions will actually read.

Codex’s structure is good for that.

The official Codex docs explicitly push users toward reusable guidance, verification, review, and configuration. They recommend AGENTS.md for project standards, testing and review loops before accepting work, and sandbox plus approval controls for safety. That is very close to how I want AI coding to work.

Not "make me a file."

More like:

"Join the system, learn the rules, do the work, prove the work, and leave the system clearer than you found it."

The security question is not optional

This is where both tools need to be treated seriously.

A coding agent is not a harmless text box once it can run commands, edit files, access secrets, call tools, open browsers, or create pull requests. At that point, it is part of your development environment.

Both Codex and Claude Code have permission models. Both have ways to constrain behavior. Both have documentation around security, configuration, and tool access.

Codex’s agent approvals and security docs describe OS-enforced local sandboxing, approval policies, network controls, and cloud isolation. The docs also note that Codex cloud uses isolated OpenAI-managed containers, with setup and agent phases separated in a way that keeps secrets available during setup and removed before the agent phase.

Claude Code’s settings and security docs describe scoped settings, permission rules, sensitive-file deny rules, managed settings, and strict read-only defaults before additional actions are approved.

Those details matter.

If you are building a toy, you can be looser.

If you are building with user data, payments, private code, production infrastructure, credentials, or anything that can hurt someone when it breaks, you need to care about the permission model as much as the model.

The best coding AI is not the one that says yes the fastest.

Sometimes the best coding AI is the one that has a good reason to stop.

Where Codex wins for me

Codex wins for me in long-running work because it feels better at accumulating a working relationship with the codebase.

The important word is not "memory." The important word is "relationship."

A codebase is not just files. It is decisions. It is old compromises. It is hidden contracts. It is the reason one route looks weird, one test is strict, one cache header is conservative, and one migration cannot be touched casually.

Codex gives me more ways to encode that.

AGENTS.md can describe how the repo works. Config can control how the agent behaves. Skills can turn repeated workflows into something stable. MCP can connect outside systems. Review flows can catch regressions. Cloud tasks can run separately from local tasks. Subagents can explore without making the main thread carry every detail.

That makes Codex feel especially good for platform work.

Not because it never makes mistakes. It does.

Not because it replaces engineering judgment. It absolutely does not.

Because when it makes a mistake, I can often turn that mistake into better instructions, better tests, better workflows, or better guardrails.

That is the loop I care about.

Where Claude Code may win for you

Claude Code may be the better choice if your preferred interface is the terminal and you want the agent to feel like another command-line tool.

Its CLI story is very strong. Its docs lean into piping, scripting, automation, git operations, hooks, MCP, and settings. If that is your center of gravity, it may feel more natural than Codex.

Claude Code may also be a strong fit if your team already standardizes around Anthropic, uses Claude heavily for planning and writing, or wants coding automation that sits close to shell workflows and CI/CD.

That is not a lesser choice.

It is just a different center of gravity.

Some people want their coding agent to feel like a teammate sitting inside a structured development environment.

Some people want it to feel like a superpowered shell companion.

Those are both valid instincts.

What I would choose by use case

If I were building a serious product over months, I would choose Codex first.

If I were working in a large repo where the agent needs durable instructions, strict review loops, repeatable tests, and clear safety boundaries, I would choose Codex first.

If I were doing long-running platform work with lots of cross-system constraints, I would choose Codex first.

If I were deeply terminal-native and wanted to pipe logs, script workflows, and stay inside the shell as much as possible, I would seriously consider Claude Code.

If I were already on an Anthropic-heavy team, I would test Claude Code first before switching ecosystems.

If I were new to AI coding, I would try both on the same real task and pay attention to which one helped me think more clearly, not which one produced the flashier first answer.

That last point is important.

The winner is not always the one that writes more code.

Sometimes the winner is the one that helps you understand the code you are now responsible for.

The best AI to code with is the one you can still review

There is a trap in this whole category.

The better these tools get, the easier it becomes to stop looking carefully.

That is dangerous.

AI-generated code is still code. It can still leak secrets. It can still break auth. It can still ship a race condition. It can still misunderstand your architecture. It can still pass the wrong test. It can still make something that looks right and is wrong in the exact place that matters.

So my real answer is this:

Codex is the best AI coding tool for the way I work right now.

Claude Code may be the best AI coding tool for the way you work.

But whichever one you choose, do not choose the one that makes you feel least responsible.

Choose the one that helps you stay responsible longer.

Choose the one that fits your workflow, your risk, your codebase, your taste, your patience, and your definition of done.

For me, that is Codex.

Not because Claude Code is bad.

Because Codex feels more dependable as the work gets longer, the system gets stranger, and the stakes get more real.

And that is where software actually lives.

Braden

Sources and further reading

OpenAI Codex: Codex Quickstart Codex Best Practices Codex Config Basics Codex Agent Approvals and Security

Claude Code: Claude Code Overview Claude Code Settings Claude Code Security Claude Code Memory Claude Code CLI Reference